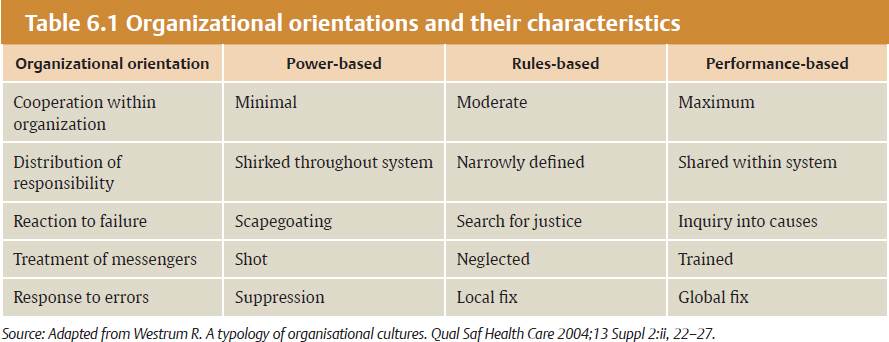

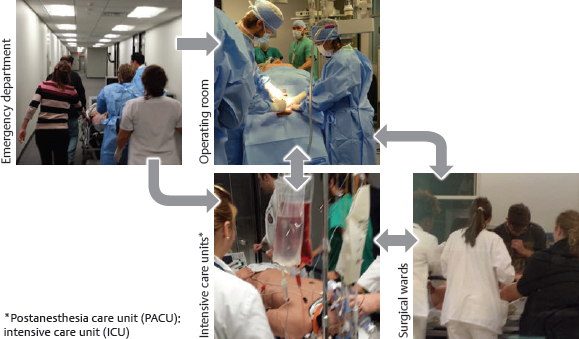

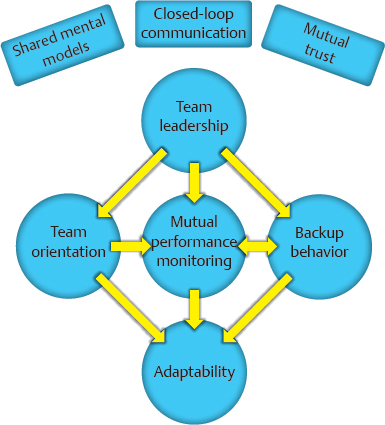

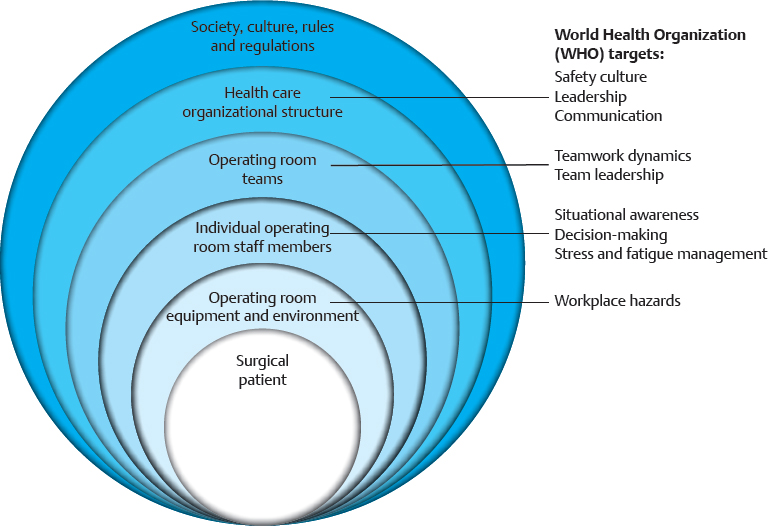

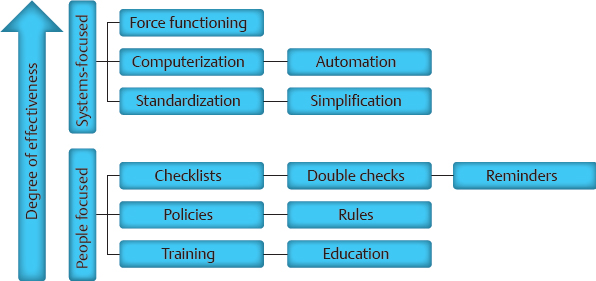

CHAPTER 6

Bringing High Reliability to the Operating Room

The operating room (OR) is a complex, dynamic work environment in which multiple professions must interact with each other and with complicated technologies in a seamless, efficient manner to ensure safe, effective patient care. Such a workplace is rife with potential problems and failings that can negatively affect the delivery of surgical treatment. These issues are magnified when the interactions of the OR with surrounding care units (e.g., the intensive care unit [ICU], the postanesthesia care unit [PACU], the emergency department [ED]) and the requirements of specialized surgical disciplines such as plastic surgery are taken into consideration. Thus the OR and its teams must function like the proverbial “well-oiled machine” to provide proper care of the patient.

How does an OR become such a “well-oiled machine” in which plastic surgical patients consistently receive excellent care? This chapter reviews key concepts related to bringing such high reliability to the OR and its affiliated care environments by addressing the following objectives:

1. Defining the essential features of high reliability organizations (HROs)
2. Discussing the role of human factors (HF) in promoting safe surgical care
3. Applying HF concepts to improve surgical teamwork and care for patients undergoing plastic surgical interventions

Organizational Culture and Adaptation

OR safety does not just “happen.” At its core, it is the result of creating and maintaining the appropriate culture and adaptability at the organizational level to ingrain key behaviors and attitudes into the individuals involved, so that safety supersedes all other concerns. In such a culture of safety, all necessary resources are devoted to supporting safety; errors and problems are openly recognized; communication is candid and frequent within and between all levels of the organization; and learning and improvement within the organization are encouraged.1 Attaining this cultural state, however, is not a given.

Organizations, like individuals, have different orientations, and depending on which one they possess, they are more or less predisposed to adopting cultural traits that make safety the primary priority2 (Table 6.1). A power-based (pathological) organizational culture is poorly equipped to address safety concerns adequately, whereas a rules-based (bureaucratic) one is often too caught up in its regulations to adapt as needed. It is the performance-based (generative) organizational culture that is best suited to putting safety first in a consistent and effective manner. Getting to this generative stage from either of the other two cultural states is an arduous and prolonged process, because cultural change requires altering the deep-seated assumptions that underlie how the organization functions in order to shift its values and behaviors. Such a transformation can take a decade or more and necessitates applying an established change-process strategy in a coordinated, concerted manner. Although difficult, successful cultural change can be dramatic, as evidenced when sports teams transform from perennial losers into consistent winners.

Thus the ultimate goal for attaining safety in health care is to create HROs that base their activities on performance and adaptability. HROs are common in other dynamic, high-risk industries in which lapses and errors can lead to catastrophic events; civil and military aviation, nuclear energy, offshore oil drilling, chemical production, and space exploration are several examples. Organizations within such industries, however, are not immune to problems if HRO principles are not followed. The Deepwater Horizon explosion and oil spill and the radiation leak at the Fukushima Daiichi Nuclear Power Plant are two relatively recent events emphasizing this point.3,4

HROs are characterized by key operational principles and attitudes that allow them to perform consistently and safely in high-hazard environments. Foremost among them is a preoccupation with failure: HROs constantly look for weaknesses in their systems in an attempt to find them before they surface on their own. Accordingly, HROs show a sensitivity to operations and a reluctance to simplify interpretations of events or problems, to ensure issues are not missed. In addition, individuals within such organizations are much more inclined to demonstrate deference to expertise in lieu of seniority or rank. All these elements combine to give the HRO a commitment to resilience when faced with rapidly changing, high-risk situations, which permits the adaptability necessary to prevent catastrophic events.5

Distilling the principles of an HRO down to a single, encompassing concept can be challenging, but it is useful for conveying the attitudes and behaviors needed within an organization. Weick and Sutcliffe’s5 emphasis that HROs promote “mindfulness” in lieu of “mindlessness” is one such effective distillation. Sutcliffe6 expanded on this concept by pointing out that HROs are reliability-seeking entities rather than reliability-achieving ones; that is, they continually strive to function highly effectively within high-risk environments.

Unfortunately, HROs are rare in health care. This fact was brought home a decade ago by the study by Singer et al1 of California hospitals, in which a safety survey completed by hospital staff and administrators yielded problematic responses from almost one fifth of clinicians and approximately one sixth of senior managers. Overall, almost one in five respondents gave problematic answers, far from the less than 10% frequency required to qualify as a culture of safety. A follow-up study by Gaba et al7 comparing safety issues between naval aviators and hospital staff illustrated the difference between the two professions: whereas only 1 in 20 naval aviators gave problematic answers to the questions on their safety survey, 1 in 5 hospital staff in high-risk departments (e.g., OR, ED) did so. Regrettably, a more recent study of urology trainees in western Scotland by Geraghty et al8 revealed that the issue persists today: almost half of these trainees felt that safety was the least important item in the hospitals in which they worked.

As alluded to previously, changing the culture of an entire organization can be daunting. Fortunately, such change can be attained at the divisional or frontline level of an organization, creating pockets in which safety is the primary priority. This is welcome news for health care, given the many characteristics that have been cited as making it unlikely to achieve HRO status: the diversity of tasks and outcomes, the vulnerability of the patients being treated and the uncertainty of their outcomes, the numerous activity patterns, and the lack of regulation.9 Thus a clinical microsystem within a hospital or health care entity (i.e., a group of health care professionals working together with a shared clinical purpose to provide care to a defined patient population) can function like an HRO. The OR is such a microsystem, and, as discussed previously, it interacts with other clinical microsystems to form a perioperative microsystem or service line within a particular health care entity (Fig. 6.1). The OR, or the larger perioperative microsystem in which it is housed, can therefore be targeted for HRO functioning.

Like HROs, well-functioning clinical microsystems demonstrate characteristics of mindfulness over mindlessness, including constancy of purpose, investment in improvement, role alignment, care team interdependence to meet patient requirements, integration of technology and information workflow, continuous measurement of outcomes, support from the larger organization (if it is an HRO), and strong community relations.10 Whether at the organizational or microsystem level, however, attaining HRO-like functioning requires an understanding and implementation of HF principles and techniques, discussed in the next section.

Human Factors Study and Team Function

HRO concepts and function have grown out of a field of study little known to clinicians in the health care industry: HF. As its name implies, the study of HF focuses on the interaction of humans with their environment. In the typical workplace, this environment can consist of the technology with which the individual works, the processes and procedures of the system, and the interaction of each individual with others in the teams in which they work. Clearly, the OR and perioperative microsystem are environments in which these interactions of technology, teams, and processes are important.

HF engineering is the application of HF theories to the real world. Its goal is to design systems and devices for safe, effective use by humans.11 To achieve this goal, HF engineers attempt to optimize the interaction of technology, teams, and processes by learning about human behaviors, abilities, and limitations.11 The emphasis on human limitations arises from one of the foundational axioms of HF: human error is inevitable, and as a result, an error-free system cannot be created.12 Defenses-in-depth are therefore needed to minimize the negative impact of an error. Another important belief is, as Cafazzo and St-Cyr13 have stated so eloquently, “a fundamental rejection of the notion that humans are primarily at fault when making errors in the use of a sociotechnical system.”

James Reason14 popularized the related notion that multiple circumstances come together to cause a catastrophic event with his Swiss cheese model of error production in complex systems. In this model, unknown or unnoticed weaknesses in an organization’s defensive systems, known as latent conditions, can align with one another or with active failures produced by the actions and decisions of individuals to lead to a catastrophic event. The holes in the layers of defense line up like those in slices of Swiss cheese, resulting in an adverse outcome. Sadly, health care has too many examples of so-called “Swiss cheese in action,” and the OR is often at the forefront of high-profile events.15,16

Thus the goal of HF engineering is to create systems that are effective at avoiding, trapping, and mitigating threats and errors.17 In other words, such systems help individuals first identify and avoid potential threats, then identify and correct current threats, and finally identify and limit the damage of errors that do occur.17 Ultimately, HF engineers want to create systems and processes that elicit desired human behaviors to mitigate errors (i.e., shape human behavior).13 In doing so, they can use systems-focused (i.e., technologic) or people-focused (i.e., human-centered) approaches. Although systems-focused strategies tend to be more effective in general, people-focused strategies are necessary to retain the positive impact of human judgment.13

Decreasing complexity, standardization, intelligent automation, computerization, optimization of information processing, and forcing functions are all examples of technologic, systems-focused solutions to reduce error.13,18 Of these, forcing functions, or the imposition of physical constraints, are the most effective. Examples of such physical constraints are encountered every day, from the inability to fit a diesel nozzle into the tank of a car that requires unleaded gasoline to the different-shaped plugs for the power and video cords on a computer. People-focused interventions include checklists, double checks, and reminders; policies and procedures (i.e., procedural constraints); and education and training.13 Whatever intervention HF engineers undertake, they must always keep in mind that implementing new strategies can have unintended consequences, and they must work to prevent them.18

As mentioned previously, one of the most powerful constraints that can be employed to trap and mitigate errors in a system is a culture of safety. Positive and negative examples of the impact of organizational culture on safety abound. The nuclear aircraft carrier USS Carl Vinson provides a positive one. In this dynamic environment, losing anything on the flight deck can have catastrophic consequences if the lost item is sucked into a jet engine. Thus when a seaman once lost a wrench and reported it, all planes were diverted from the carrier, and the flight deck was searched until the lost item was found. The next day, the seaman was recognized and rewarded at a formal ceremony for reporting his error.5 The saga of British Petroleum (BP) provides a negative example. Although the company has branded itself as an environmental company, its focus on profit over safety has resulted in a series of environmentally devastating events: the Texas City Refinery explosion,19,20 the Prudhoe Bay trans-Alaska pipeline oil spill,20 and the aforementioned Deepwater Horizon (Macondo well) explosion and oil spill in the Gulf of Mexico.3,20

The linchpin of creating an HRO and promoting a culture of safety is having highly reliable teams of individuals within the organization to carry out its functions.21 Without such teams, the communication and resilience essential for HRO operation are severely limited. Salas et al22 have identified five key team traits that, together with three coordinating mechanisms, are consistently found in highly reliable teams in high-risk industries such as the military and aviation. These traits interact with the coordinating mechanisms and with each other to produce teams that are able to show resilience and adaptability in high-stress, rapidly changing environments22,23 (Fig. 6.2). This Big Five Model of Teamwork serves as the foundation of Team Strategies and Tools to Enhance Performance and Patient Safety (TeamSTEPPS), developed by the Department of Defense in coordination with the Agency for Healthcare Research and Quality.24

As with a culture of safety, highly reliable team function is lacking in health care, especially in the OR. A silo mentality combined with tribalism promotes multiprofessional interaction in lieu of true interprofessional teamwork.25 This situation is exacerbated by unwanted hierarchical structures,26,27 role confusion,28,29 differing perceptions of teamwork,30,31 and poor communication,32 all of which undermine team interaction. The lack of effective communication is especially troublesome because of the confusion it creates. As in Abbott and Costello’s famous “Who’s on First?” routine, members of the OR team speak the same language but do not understand one another. OR personnel suffer from information misunderstanding and information confusion. In addition, information in the OR is often given too early or too late, messaging is ambiguous (e.g., hinting at a problem and hoping the person gets it), or information is not heard by all members. Finally, ineffective communication occurs throughout the entire surgical care process,33 negatively affecting patient care.34,35

Fig. 6.2 The Big Five Model of Teamwork. Adapted from Salas E, Sims DE, Burke CS. Is there a big five in teamwork? Small Group Res 2005;36:555–599.

The result of this ineffective teamwork is tension, leading to disruptive behavior and distractions.36,37 These issues combine to have a negative impact on patient care. Poor teamwork in the OR has been shown to increase technical errors,38 lead to higher morbidity and mortality,39 decrease patient safety,40 and be a major factor in sentinel events.41 Clearly, especially in the OR setting, effective teamwork is an essential component of providing proper care to surgical patients.

Applying Human Factors Engineering to Promote Safe, Effective Surgical Care

By taking an HF engineering approach to the OR and the perioperative microsystem, a multipronged, comprehensive strategy can be used to promote HRO principles and function and allow the provision of safe, effective surgical care. From an HF perspective, the care of the surgical patient is set within multiple spheres of influence involving all levels of an organization and society in general, each of which can be targeted for interventions to promote patient safety42 (Fig. 6.3). The World Health Organization (WHO) has identified 10 key HF topics within these various spheres of influence as the most relevant targets for intervention and has delineated various tools to help with both measuring and implementing them42 (Table 6.2). Although the tools and measures suggested by the WHO are far from comprehensive, they emphasize the importance of measurement to gauge the effectiveness of any changes attempted. Without accurate (i.e., valid and reliable) methods of measurement and assessment, progress cannot be gauged appropriately.

| Human factors topics for promoting patient safety | Tools and measurements |
|---|---|
| Organizational/managerial level | |
| Safety culture | Hospital Survey on Patient Safety; Safety Attitudes Questionnaire; Manchester Patient Safety Assessment Framework |
| Managerial leadership | Multifactor Leadership Questionnaire; Authentic Leadership Questionnaire; Leadership Practices Inventory; “Seeing Yourself as Others See You”; NHS Leadership Qualities Framework; Leadership Guide to Patient Safety; Leadership Checklist for NHS Chief Executives; Patient Safety Walk Rounds Guide and Data Collection Tool |
| Communication | DOD Handover Tools; “Do Not Use” List; Safety Briefing Tool and SBAR Tool; Safe Handover; Surgical Safety Checklist; DASH Debriefing Tool; Team Self Review Debriefing |
| Teamwork dynamics (structure and processes) | Team Climate Assessment Measurement; Team Self Review; TeamSTEPPS Teamwork Attitudes Questionnaire; Anesthetists’ Nontechnical Skills; Nontechnical Skills for Surgeons; Nontechnical Skills for Theatre Nurses; Observational Teamwork Assessment for Surgery; Oxford NOTECHS; Revised NOTECHS |
| Team leadership | Situational Leadership; Leadership Opinion Questionnaire; Perceptions of Supervisory Behaviours for Safety |
| Individual level (cognitive skills) | |
| Situation awareness | Situation Awareness Global Assessment Technique; Situation Awareness Rule of Three |
| Decision making | Questionnaire for Decision Making Styles; Cognitive Task Analysis; Tactical Decision Games |
| Individual level (personal resources) | |
| Stress | HSE Stress Indicator Tool; NIOSH Occupational Stress Information and Stress Questionnaire |
| Fatigue | Fatigue Avoidance Scheduling Tool; Fatigue and Risk Index |
| Work environment level | |
| Workplace hazards | Root Cause Analysis Toolkit; Root Cause Analysis Framework; Healthcare Failure Mode and Effects Analysis; Failure Mode and Effects Analysis; Fault Tree; Job Hazard Analysis; Probabilistic Risk Assessment |

Abbreviations: DASH, Debriefing Assessment for Simulation in Healthcare; DOD, Department of Defense; HSE, Health and Safety Executive; NHS, National Health Service; NIOSH, National Institute for Occupational Safety and Health; NOTECHS, nontechnical skills; SBAR, Situation, Background, Assessment, Recommendation; STEPPS, Strategies and Tools to Enhance Performance and Patient Safety.

Source: From Methods and Measures Working Group of WHO Patient Safety. Human Factors in Patient Safety: Review of Topics and Tools. World Health Organization; 2009.